GPT-5.3-Codex-Spark and AI coding addiction

OpenAI announced its new coding model, GPT-5.3-Codex-Spark, today, only a week after the release of GPT-5.3-Codex. They say it is designed for real-time coding and can serve more than 1,000 tokens per second. Real-time coding here means getting near-instant responses, so you see the results of your requested changes immediately. It runs on Cerebras for high-speed inference.

When I read ‘ultra-fast model’, I first thought of Fast mode for Opus 4.6 in Claude Code. The key difference is that Fast mode is the same model with a different API configuration that prioritizes speed over cost, whereas Codex-Spark is a different model, with a drop in quality and capabilities.

It’s also interesting that the reduced latency comes not just from improved model speed, but also from improvements to the harness itself:

“As we trained Codex-Spark, it became apparent that model speed was just part of the equation for real-time collaboration—we also needed to reduce latency across the full request-response pipeline. We implemented end-to-end latency improvements in our harness that will benefit all models […] Through the introduction of a persistent WebSocket connection and targeted optimizations inside of Responses API, we reduced overhead per client/server roundtrip by 80%, per-token overhead by 30%, and time-to-first-token by 50%. The WebSocket path is enabled for Codex-Spark by default and will become the default for all models soon.”
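To get a feel for what those percentages could mean for a single agent turn, here is a rough back-of-the-envelope sketch in Python. Only the three reductions (80%, 30%, 50%) come from the quote. The additive latency model, the `LatencyProfile` class, and every baseline number are my own assumptions for illustration, not measurements or anything OpenAI has published.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LatencyProfile:
    """Hypothetical per-request latency components, all in seconds."""
    time_to_first_token: float
    roundtrip_overhead: float   # fixed cost per client/server roundtrip
    per_token_overhead: float   # non-model overhead added per streamed token
    tokens_per_second: float    # raw model generation speed


def response_time(p: LatencyProfile, tokens: int, roundtrips: int) -> float:
    """Wall-clock time for one turn under a simple additive model (an assumption)."""
    generation = tokens / p.tokens_per_second
    return (
        p.time_to_first_token
        + roundtrips * p.roundtrip_overhead
        + tokens * p.per_token_overhead
        + generation
    )


def apply_quoted_reductions(p: LatencyProfile) -> LatencyProfile:
    """Apply the reductions from the quote: roundtrip -80%, per-token -30%, TTFT -50%."""
    return LatencyProfile(
        time_to_first_token=p.time_to_first_token * (1 - 0.50),
        roundtrip_overhead=p.roundtrip_overhead * (1 - 0.80),
        per_token_overhead=p.per_token_overhead * (1 - 0.30),
        tokens_per_second=p.tokens_per_second,  # model speed itself is unchanged
    )


# Made-up baseline values, chosen only to make the arithmetic easy to follow.
baseline = LatencyProfile(
    time_to_first_token=1.0,
    roundtrip_overhead=0.2,
    per_token_overhead=0.001,
    tokens_per_second=1000.0,
)
improved = apply_quoted_reductions(baseline)

# One hypothetical turn: 2,000 output tokens spread over 10 roundtrips.
before = response_time(baseline, tokens=2000, roundtrips=10)
after = response_time(improved, tokens=2000, roundtrips=10)
print(f"before: {before:.2f}s, after: {after:.2f}s")
```

With these made-up inputs, the turn drops from about 7 seconds to about 4.3 seconds without the model getting any faster. The real effect depends entirely on how the actual baseline splits between overhead and generation time, which the announcement doesn’t spell out.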

I wonder whether other harnesses (Claude Code, OpenCode, Cursor, etc.) can make similar improvements to reduce latency. I’ve been vibe coding (or doing agentic engineering) with Claude Code a lot over the last few days, and some tasks have taken as long as 30 minutes.

Shift towards speed

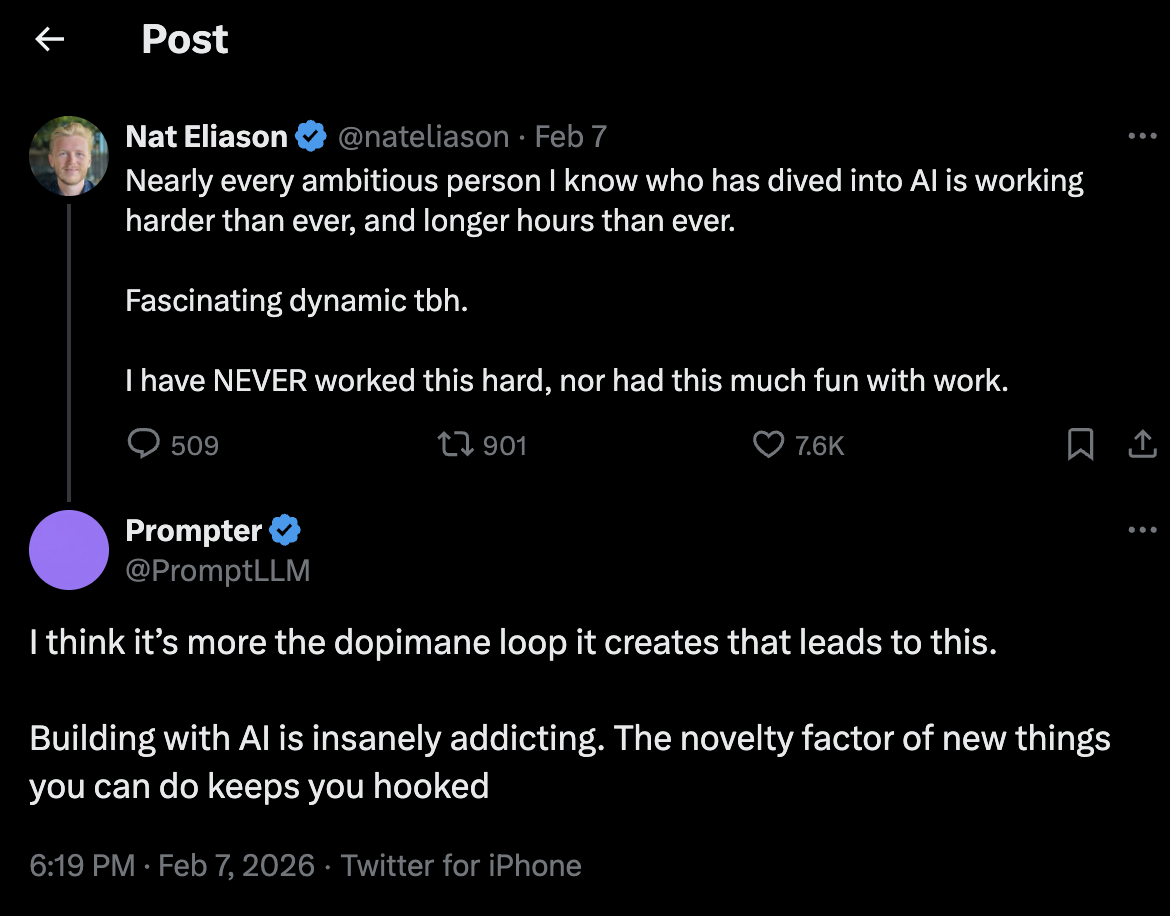

Vibe coding itself is very addicting (I’m becoming a Claudoholic). Once you see what these models are capable of, there’s no going back to writing code by hand. The feedback loop is also very quick: you ask for a change, it does its thing, and you see the output. Seeing your idea come to life by simply prompting in natural language is very exciting. I have been staying awake past bedtime this week because I couldn’t stop telling myself “just one more prompt”. This tweet summarizes this feeling well:

Now imagine how much more addicting it would be with near-instant responses. Even without such speeds, I’m already tempted to buy multiple Claude subscriptions so that I can keep going if I hit the limits.

I think most regular users are happy with response times as they are, but I expect we will see ultra-fast models coming out of Anthropic and DeepMind very soon.